Yesterday, Shawn Herbig, President of IQS Research, spent an hour on the radio discussing some of the intricacies involved in the research and polling process. Given the current election season, one thing we know for certain is that there is no shortage of polling results being released.

So that raises the question: how do we know which polls are right and which are not? Does each new poll released on a daily basis reflect real changes in how we think about the candidates? Are polling and research indicative of emotions or behaviors, or both? These are some of the things Herbig tackled yesterday.

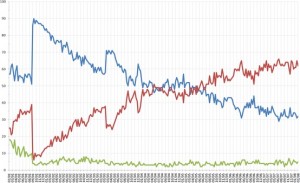

We posted a discussion late last year about how it may be a good idea to look at what are called polls of polls, which take into consideration the summation of research done on a particular topic (in this case, political polling). This will help to “weed out” fluff polls that may not be very accurate, and to place a heavier emphasis on the trend rather than specific points in time.
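
To make the idea of a poll of polls concrete, here is a minimal sketch of one simple way to combine several polls: an average weighted by sample size, so larger polls count for more. The function name `poll_of_polls` and the three example polls are hypothetical, made up purely for illustration, and real aggregators typically also adjust for things like poll age and methodology.

```python
def poll_of_polls(polls):
    """Combine polls into one estimate, weighting each by its sample size.

    `polls` is a list of (share, sample_size) pairs, where `share` is the
    fraction of respondents backing a candidate (0.48 means 48%).
    """
    total_respondents = sum(n for _, n in polls)
    weighted_sum = sum(share * n for share, n in polls)
    return weighted_sum / total_respondents


# Hypothetical polls, invented for illustration only (not real results).
example_polls = [
    (0.48, 1000),
    (0.51, 600),
    (0.47, 1400),
]

combined = poll_of_polls(example_polls)
print(f"Combined estimate: {combined:.1%}")
```

Notice that the single poll showing 51% barely moves the combined figure, which is the point: one outlier matters less than the overall trend.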

But beyond this, understanding the methodology behind polls is useful when deciding whether or not those results are reliable. A few things to note:

1. What is the sample size? – Political polls in particular are attempting to gauge what an entire country of over 200 million registered voters thinks about an election. A sample of about 385 respondents is enough to represent a population of 200 million at a 95% confidence level with a margin of error of about ±5 percentage points. But oftentimes you see polls with around 1,000 respondents. Oversampling like this allows researchers to make cuts in the data (say, what women think, or what African Americans think) and still maintain a comfortable confidence level in the results.

2. How was the sample collected? – Polls on the internet, or ones that are done on media websites, aren’t too trustworthy. They attract a particular group of respondents, thus skewing the results one way or another. Scientific research maintains that a sample must be collected randomly in order for the results to be representative of a population. In other words, for a political poll, each person in the population must have the same chance of being selected as any other person.

3. Understand the context of the poll/research – When the poll was taken is crucial in understanding what it is telling us. For instance, there was a lot of polling done after each one of the presidential debates. Not only did researchers ask who won the debate, but they also asked who those being polled were going to vote for. After the first debate (which we could argue went in Romney’s favor), most polls showed the lead Obama had going into the debate had vanished. Several polls showed Romney with a sizable lead. But was this a statistical push due to the recent debate and the emotion surrounding it? Or was this increase real?

Recent polls show a leveling between the two candidates now that the debates are over and a more objective look at the candidates can be achieved. However, it is nearly impossible to eliminate emotion in responses, especially in a context as controversial as politics.

4. Interpreting Results – Interpretation ties in nicely with understanding the context of the research that you are viewing. But there is a task for each of us as we interpret, and that is to leave behind our preconceived notions about the results. This is very hard to do, as it is a natural human instinct to believe what justifies our own reasoning. This is known as confirmation bias, and it can impact the way we accept or discount the research.
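
For readers who want to check the sample size figures in point 1, here is a minimal sketch using the standard formula for estimating a proportion from a simple random sample. The 385 figure matches that formula if we assume a 95% confidence level (z = 1.96), a ±5 percentage point margin of error, and the most conservative split of opinion (50/50). Those settings are our assumptions for the sketch, as is the hypothetical subgroup of 500 respondents; the function names are ours as well.

```python
import math


def required_sample_size(margin_of_error, z=1.96, p=0.5, population=None):
    """Sample size needed to estimate a proportion within +/- margin_of_error.

    z=1.96 corresponds to a 95% confidence level; p=0.5 is the most
    conservative assumption about how opinion is split.
    """
    n = (z ** 2) * p * (1 - p) / margin_of_error ** 2
    if population is not None:
        # Finite population correction; negligible for very large populations.
        n = n / (1 + (n - 1) / population)
    return math.ceil(n)


def margin_of_error(n, z=1.96, p=0.5):
    """Margin of error (as a fraction) for a simple random sample of size n."""
    return z * math.sqrt(p * (1 - p) / n)


# Sample size for +/-5 points at 95% confidence, population of 200 million.
print(required_sample_size(0.05, population=200_000_000))

# Margin of error for a typical poll of 1,000 respondents.
print(f"{margin_of_error(1000):.1%}")

# Margin of error for a hypothetical subgroup of 500 respondents.
print(f"{margin_of_error(500):.1%}")
```

The population size hardly matters once it is large, which is why a few hundred respondents can stand in for millions. The bigger sample of 1,000 buys a tighter margin overall and keeps the margin reasonable when the data is cut into smaller groups.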

Taking all this into account can help us to sift through the commotion and find the value of the research being produced. This isn’t just for political polling, but can be used for all research that you encounter. Being good consumers of research can take a lot of effort, but it is the only way to gain a more realistic view of the world around you.